How Google Knows What Sites You Control And Why it Matters - Whiteboard Friday |

| How Google Knows What Sites You Control And Why it Matters - Whiteboard Friday
Posted: 01 May 2014 05:09 PM PDT
Posted by Cyrus-Shepard
Google obviously looks at a great many factors in determining site rankings, but what do they know about us as administrators of our sites, and how do they use that information? In today's Whiteboard Friday, Cyrus talks about some of the actions that Google takes based on information it can see, offering advice on how you can see the inherent benefits and avoid the pitfalls.
For reference, here's a still of this week's whiteboard!
Howdy Moz fans. Welcome to another edition of Whiteboard Friday. My name is Cyrus. Today we're going to be talking about how Google knows what sites you control and why it matters. How much does Google know about the sites you own? Can that be used to your advantage? Can it be used to hurt you? These are important questions that webmasters often ask.
Now technically, when there are relationships between websites, when you own websites, when you have a lot of websites you control, this is traditionally known as an administrative relationship, meaning that you are an administrator. You can control the links on the site. You control the content of the site. Maybe these are sub-domains that you own. Maybe these are multiple properties within your business. But Google, over the past decade or so, has spent an incredible amount of energy trying to figure out administrative relationships between websites, both to help you and, sometimes, to discount links between those sites or potentially apply some negative consequences.
The reason that this is so important to Google is that, if you think about the link graph and relationships between sites, links between sites that are controlled by the same person probably shouldn't count as much as links that are editorial and controlled by other people, because when you get links, you want them to be natural and not something that you control.
The Good Side of Related Websites
But other times, Google wants to reward you for links that are related to one another. There are some definite advantages to establishing those relationships between sites that you own, and sometimes you want to tell Google that you own multiple sites. One example is to distribute the authority between those sites.
Now a perfect example is something like eBay. eBay has a site in the United States, and they open a brand new site in Ecuador. They want that Ecuador site to rank well, but they don't want to start over. They've already built up their American site so much. They want to transfer some of that link equity. So they want to let Google know that, "Hey, this is eBay. This is us. This should be an authoritative website."
This also works on a much smaller scale sometimes, often on sub-domains. You see a lot of blogs being started on sub-domains of websites because it's easier from a development point of view or for whatever reason. You want that sub-domain, that blog, to have the same authority as your main site. Now it's oftentimes up to Google whether or not they give that authority to your blog or your sub-domain. But if you can give them signals to tell them, "Yes, this is associated with my main domain," that often goes a long way in helping that sub-domain to rank.
Same with alternate languages. You have French content. You have Spanish content. You have English content. They're all on your site. Maybe they're on a different sub-domain or a different top level domain, but you want Google to know that they have the same authority as your main site that you worked so long to build up.
Also, we're starting to see identity play a role in administrative relationships, more at a page level with things like Google Authorship. Identity is becoming a big issue, and Google is working to figure out those identities on the web.
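
For the alternate-language case, one signal you can send explicitly is the hreflang annotation: each language version of a page lists every version, itself included, in its `<head>`. What follows is only a minimal Python sketch of generating those tags. The function name and the example.com URLs are hypothetical, and it assumes you already know which URLs are translations of each other.

```python
from html import escape


def hreflang_tags(alternates: dict) -> str:
    """Build one <link rel="alternate"> tag per language version of a page.

    `alternates` maps a language code (e.g. "fr") to the URL of that version.
    The same full set of tags is meant to go on every version of the page.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in alternates.items()
    ]
    return "\n".join(tags)


# Hypothetical URLs, for illustration only.
alternates = {
    "en": "https://example.com/",
    "fr": "https://fr.example.com/",
    "es": "https://example.com/es/",
}
tags = hreflang_tags(alternates)
print(tags)
```

The sketch covers sub-domains and sub-folders alike, since the annotation only cares about the URL of each version.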
Negative Side Effects of Related Websites
Then there's the flip side, the bad side of administrative relationships. That's traditionally what SEOs and webmasters have been dealing with when they think about these things. The biggest problem is diminished link equity. Again, that problem of Google seeing that you control these sites: why should they pass as much link equity as sites that you don't control? So a lot of black-hat SEOs and gray-hat SEOs go to great lengths to hide their relationships between sites, because they don't want Google to discount that link equity.
Also, there's the idea of link schemes in bad neighborhoods. If there are 12 sites, and they're all interlinking to each other, that might be a pretty good signal to Google that it's sort of a link scheme and those links shouldn't count, or they could be penalized.
Finally, we're seeing a new phenomenon in Google: penalties following people around the web. These are instances where people are penalized. They burn their site to the ground. They're so frustrated. They decide to just start over on a completely new domain. But when they do so, ironically, amazingly, they find the penalty transferring to that new domain, even though they've cut all the backlinks. They've changed the URL, everything. How does Google know that that's the same site?
So these are important questions to ask yourself and help determine: Can you be helped by establishing these relationships between sites, or can you be hurt? If you understand some of the signals Google is using, you can take advantage of this.
Potential Signals of Related Sites
Now one thing I want to emphasize is we don't know all the signals. We have a few clues. Traditionally, Google has been looking at things like ownership, WHOIS records, very freely available on the Internet, where your site is hosted, the IP address, things like that. Elijah, what's the name of that website that we go to, to check who owns what?
Elijah: SpyOnWeb?
Cyrus: That's right.
SpyOnWeb. Here's a simple experiment you can do. Go to SpyOnWeb.com. Type in a very common domain, like Moz.com. You can see all the relationships that we, Moz, have with all these sites that we either own or that are hosted on the same IP or use the same Google Analytics code or the same AdSense code. All this information is publicly available on the web. You don't need access to the Google Analytics account or the AdSense account. It's all there in the source code of the websites. By scraping the web and gathering all this information together, you can create a web of ownership that's pretty easy to dissect.
Traditionally, C-blocks have been an indication of relationships on the web. It's something that we here at Moz report in Open Site Explorer: the number of unique linking C-blocks. Right now though, we are in a transition with this, where the web is moving to a new Internet Protocol version, Version 6 (IPv6). The old C-block was based on Version 4. So the C-block is actually going away, and the engineers here at Moz, working with some very smart people in the consulting world, such as Distilled, are figuring out some new standards to report instead of C-blocks, because we're losing these very soon.
Also, link patterns. When you have, again, a lot of sites linking to each other, and Google has a complete catalog of links, or the most complete catalog of links on the web, when you take all this together, using various statistical analysis methods, you can determine pretty closely who's associated with what, who has control over what. These are all things that people are looking at, all that publicly identifiable information.
Some signals that people don't often consider are what I would call soft signals or content signals.
These are more advanced signals that people don't actually always think about, but things that Google could look at, that we've seen them talk about in patent papers, are things like when two sites have identical or similar content, meaning content on Site A is the same as content on Site B. This would be a strong clue to Google that it may be the same site. They would probably look for a few of these other things, such as who has registration or analytics code or something like that, because a lot of sites get scraped. It's not a very clear signal. Formatting, CSS, you'll often see sites that are owned by the same individual use a lot of the same WordPress templates, for example, or a lot of the same CSS files or JavaScript files. Again, by themselves, this is not a definitive clue because there's a lot of templates out there, a lot of free stuff floating around the Internet. But when combined with the other signals, it can create a very, very clear indication of those relationships. Even something as simple as the contact details on your About Us page, if those are the same from site to site, it can be very clear that these sites are related. Then on the page level, we have things like authorship. I've seen this work really well with in-depth articles, certain authors. This isn't a domain level signal, but more of a page level signal that can help individual pages to rank. For content and language signals, the hreflang. Again, this is when you have sites in different countries, different languages, using this attribute can help establish those relationships to help you to rank. So in general, it's very hard to hide these relationships from Google, because they have so much data available, and it's really not worth it. But oftentimes it is worth it in the cases of sub-domains, alternate languages, authorship, that you want to help boost these signals. 
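
To show how little it takes to combine the publicly visible signals discussed above, here is a minimal Python sketch that groups domains by shared IPv4 C-block and shared Google Analytics account number. It is not how Google or SpyOnWeb actually work, which we don't know. The domains, IP addresses and tracking IDs are invented, and the regular expression assumes the classic `UA-XXXXXXX-Y` tracking ID format.

```python
import ipaddress
import re
from collections import defaultdict

# Classic Google Analytics property IDs look like "UA-1234567-1";
# the middle number identifies the account, the suffix the property.
UA_PATTERN = re.compile(r"\bUA-(\d{4,10})-\d{1,4}\b")


def c_block(ip: str) -> str:
    """Return the first three octets (the "C-block") of an IPv4 address."""
    addr = ipaddress.IPv4Address(ip)  # raises ValueError for non-IPv4 input
    network = ipaddress.ip_network(f"{addr}/24", strict=False)
    return str(network.network_address).rsplit(".", 1)[0]


def analytics_accounts(html: str) -> set:
    """Return the Analytics account numbers found in a page's source."""
    return set(UA_PATTERN.findall(html))


def group_by_shared_signal(sites: dict) -> dict:
    """Map each signal to the set of domains sharing it (two or more only)."""
    groups = defaultdict(set)
    for domain, info in sites.items():
        groups["cblock:" + c_block(info["ip"])].add(domain)
        for account in analytics_accounts(info["html"]):
            groups["ga:" + account].add(domain)
    return {signal: doms for signal, doms in groups.items() if len(doms) > 1}


# Invented data: documentation-range IPs and made-up tracking IDs.
sites = {
    "example-a.test": {"ip": "192.0.2.10", "html": "ga('create', 'UA-1234567-1', 'auto');"},
    "example-b.test": {"ip": "192.0.2.77", "html": "ga('create', 'UA-1234567-2', 'auto');"},
    "example-c.test": {"ip": "198.51.100.5", "html": "<p>no tracking code</p>"},
}
groups = group_by_shared_signal(sites)
print(sorted(groups))  # ['cblock:192.0.2', 'ga:1234567']
```

Any single match here is weak evidence, as shared hosting alone puts unrelated sites in the same C-block. As the transcript says, it is the combination of signals that makes the relationship clear.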
Understanding how these all work can give you clues as to why you're ranking, why you're not, and sometimes what you can do to help. That's all for today. Thanks, everybody. Bye-bye. Video transcription by Speechpad.com Sign up for The Moz Top 10, a semimonthly mailer updating you on the top ten hottest pieces of SEO news, tips, and rad links uncovered by the Moz team. Think of it as your exclusive digest of stuff you don't have time to hunt down but want to read! |

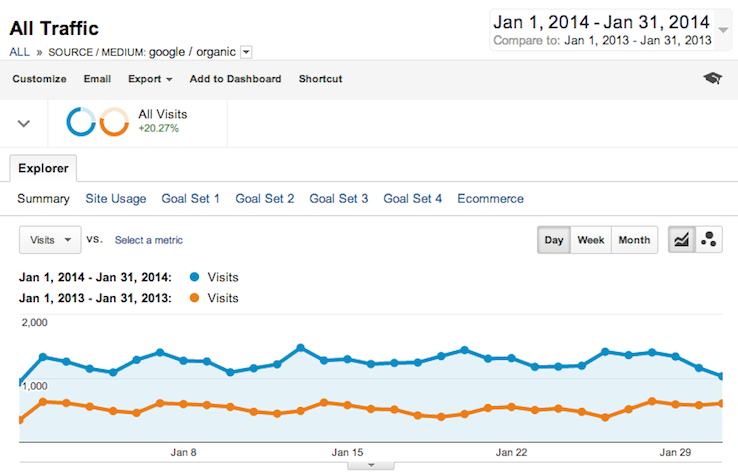

| Increasing Search Traffic By 20,000 Visitors Per Month Without Full CMS Access - Here's How...
Posted: 01 May 2014 03:49 AM PDT
Posted by RoryT11
This post was originally in YouMoz, and was promoted to the main blog because it provides great value and interest to our community. The author's views are entirely his or her own and may not reflect the views of Moz, Inc.
Trying to do SEO for a website without full access to its CMS is like trying to win a sword fight with one hand tied behind your back. You can still use your weapon, but there is always going to be a limit to what you can do. Before this metaphor gets any further out of hand, I should explain. One year ago, the agency I work for was asked to run an SEO campaign for a client. The catch was, it would be impossible for us to gain full access to the CMS that the website was built on. Initially I was doubtful about the results that could be achieved.
Why no CMS?
The reason we couldn't access the CMS is that the client was part of a global group. All sites within this group were centrally controlled on a third-party CMS, based in another country. If we did want to make any 'technical changes', it would have to go through a painfully slow helpdesk process. We could still add and remove content, edit metadata and had some basic control over the navigation.
Despite this, we took on the challenge. We already had a strong relationship with the client because we handled their PR, and a good understanding of their niche and target audience. With this in mind, we were confident that we could improve the site in a number of ways that would enhance user experience, which we hoped would lead to increased visibility in the SERPs. What has happened in the last year since we started managing the search marketing campaign has emphasised to me just how important it is to implement well-structured on-page SEO.
The client's website is now receiving over 20,000 more visits from organic search per month than it did when we took over the account. I want to share with you how we achieved this without having full access to the CMS. The following screenshots are a direct comparison of January 2013 and January 2014. Corresponding figures can be viewed in the summary at the end of the post.
Analytics
When we were granted access to analytics for the website, we got our first real insight into how the site was performing, and what we could do to help it perform better. By analysing the way visitors were using the site (visitor journeys, drop-off points, most visited pages, which pages had the highest avg. time etc.), we could start to structure our on-page strategy.
We identified how we could streamline the navigation to help people find what they were looking for more quickly. We also decided it was necessary to create clearer calls to action, which would shorten the distance from popular landing pages to the most valuable pages on the website. We also looked at the top landing pages, and with what keyword data we had access to, we were able to define more clearly why people were visiting the site, and what they expected when they landed on a page.
For example, the site was receiving a lot of traffic for one of its products, with visitors coming into the site from a range of relevant short and longtail keywords. However, they would almost always land on the product page. We noticed by analysing visitor journeys from this page that they would leave to try to find more information on the item, because the majority of visitors weren't entering the site at the buying stage of the conversion cycle. However, where this supporting information lived on the site wasn't immediately obvious. In fact, it was nearly four clicks away from the product landing page! It was obvious we'd have to address this, and other similar issues we identified simply by conducting some fairly simple analysis of the analytics data.
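
The "four clicks away" problem was found here by reading visitor journeys in analytics. If you also have a map of a site's internal links, the same kind of distance can be measured directly with a breadth-first search. The following is a minimal Python sketch of that idea; the link map and page paths are invented for illustration and are not the client's site.

```python
from collections import deque


def click_depths(links: dict, start: str) -> dict:
    """Breadth-first search: fewest clicks needed to reach each page from `start`."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest route
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link map: page -> pages it links to.
site_links = {
    "/products/widget": ["/products", "/"],
    "/": ["/products", "/support"],
    "/support": ["/support/guides"],
    "/support/guides": ["/support/guides/widget-faq"],
}
depths = click_depths(site_links, "/products/widget")
buried = [page for page, depth in depths.items() if depth >= 3]
print(depths["/support/guides/widget-faq"])  # 4
```

Adding a single link from the product page to the FAQ in this toy map would bring its depth down from four clicks to one, which is the kind of change clearer calls to action and interlinking are meant to achieve.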
Product Pages
The product pages were generated from a global product catalogue built into the content management system. They aren't great, but because we didn't have access to the catalogue or the CMS, there was not much we could do directly to the product pages.
Rewriting content
I don't necessarily believe that there is such a thing as 'writing for SEO'. Yes, you can structure a page in a certain formulaic way with keywords in header tags, alt tags and title tags. You can factor low-competition longtail phrases and target keywords into the copy as well…but if you sacrifice UX in favour of anything that I've just mentioned, then I'll just be honest, you're doing it wrong.
From looking at the data in Google Analytics (low avg. time on site and a bounce rate that should have been lower), and reading through the website ourselves, it became clear that the content needed to be rewritten. We did have a list of target keywords, but our main objective was to make the content more valuable to the users.
To do this, we worked closely with the PR team, who had a great understanding of the client's products and key messages. They had also developed personas about the type of visitor that would come to the client's site. We were able to use this knowledge as a foundation to rewrite, restructure and streamline sections of the website that we knew could be performing better.
Another thing we noticed from analysing the content is that interlinking was almost non-existent. If a visitor wanted to get to another piece of information or section of the website, they'd be restricted to using the main navigation bar. Not good... We addressed this in the rewriting process by keeping a spreadsheet of what we were writing and the key themes in those pages. We could then use this to structure interlinking on the website in a way that would direct visitors easily to the most relevant resources.
As a result of this we have seen time on site increase by 14.61% for visitors from organic search.
Working with the PR team
As I have mentioned, we also handled PR for this client. Luckily, the PR team provided brilliant support to the search marketing side of the account. This has proved integral to the success of this campaign for two reasons:
1) The PR team know the client better than anyone. It might even be fair to say they know more about the products and target audience than the client's own marketing team. This helped us build a firm understanding of why people would come to the site, what they'd expect to see, and what the client wanted to achieve with its web presence. This was great in terms of helping us identify what people would search for to find the site, which in turn allowed us to structure the content rewrite more effectively.
2) By working with the PR team, we were able to co-ordinate the on-page and off-page work we were doing, to align with PR campaigns. For example, if they were pushing a certain product, or raising awareness of a specific campaign, we knew we'd see an increase in search volume in those areas. The SEO team would then also focus efforts on promoting the same product. When the search volume increased, our site was there to capture the traffic. Unlike in the previous example when the traffic was sent to a product page, we were able to create a fully optimised landing page. With this approach we knew we'd get a good volume of targeted traffic - we just needed to be there to capture it and give a friendly nudge in the right direction.
Restructuring navigation
The main navigation menu on the site proved to be a source of great frustration. Functionality was extremely limited...we couldn't even create dropdown menus as that wasn't built into the CMS. That meant we needed to be really tight with our navigation options, as well as making it obvious where each navigation link would lead.
Again, we worked with the PR team and the client, as well as using information from Google Analytics to learn about how visitors were using the site, and how the client wanted them to use the site. Armed with this information, we streamlined the navigation to support user experience by creating better landing pages for the navigation links and making the most popular and valuable pages of the website more accessible.
The result has been that although people are spending more time on page than 12 months ago, they are visiting fewer pages. This has helped us show the client that the navigation was working better, and that visitors were able to find the information they required more easily.
Valuable content
There's a vicious rumour circulating at the moment that quality content (no... not 300 word blog posts) can help drive SEO success. Well, we decided to test this for ourselves… As well as rewriting existing copy, we also created new content that we hoped would drive more organic search traffic to the site.
We created infographics (good ones), product-specific and general FAQs, video and text based tips and advice pages, as well as specific landing pages for the client's three 'hero' products. We knew from looking at the analytics that there was definitely opportunity to get more longtail traffic, but we wanted to combine this with creating a genuinely useful resource for the visitors. Nothing we did was hugely resource intensive in terms of content creation, but what we did create was driven by what the data told us people wanted to see.
As a result, the tips and advice pages and FAQs have both pulled in significant volumes of organic search traffic, and given users something of value. The screenshots below illustrating this are taken from the middle of August 2013, when the pages went live, to the end of January 2014.
Fixing Errors
With the site plugged into Moz, we were pretty shocked to see the crawl diagnostics return 825 errors, 901 warnings and 976 notices.
This equated to almost one warning and one error on every single page on the site. The biggest culprits were duplicate page titles, duplicate page content and missing meta tags.
The good news: I got to spend tonnes of time doing what every SEO ~~hates~~ loves, handcrafting new metadata!
The bad news: the majority of errors were caused by the CMS, namely how it dealt with pagination, the poor integration of the product catalogue and the way it handled non-public (protected) pages.
As part of our initial audit on the site, we noticed the site didn't even have a robots.txt. As you know, this meant the search engine bots were crawling every nook and cranny, getting in places that they had no business going in. So, as well as manually crafting new metadata for many pages, we also had to try and get a robots.txt that we had written onto the site. This meant going through a helpdesk, where they didn't understand SEO and where English wasn't their first language. A gruelling process, but after several months of trying, we got that robots.txt in place, making the site a lot more crawler friendly.
Now we're down to 122 errors and 377 warnings. Okay, I know it should be lower than that, but when you can't change how the CMS works, or add functionality to it, you do the best you can.
Conversions
The client does not sell directly through its website, but through a network of distributors. The quickest way for a customer to learn about their closest distributor is to use the 'Contact Us' page. Again, admittedly, this is far from the best system but unfortunately, it is not something we're able to change at this stage. Because of this, we made people visiting the 'Contact Us' page a conversion goal that would be a KPI for the campaign. We have seen this increase by over 21% in the last 12 months, which has helped us prove value to the client, as these are the kinds of visits that will have a positive impact on their bottom line.
It's good to know you're not only driving a high volume of traffic, but also a good quality of traffic.
Off-page
The reason I've saved off-page to last is that I really don't dwell on it. Yes, we did follow traditional 'best practices': blogger and influencer outreach, producing quality content for people to link to. But we didn't do anything revolutionary or game-changing. The truth is, we had so much work to do on-page that we kind of let the off-page take care of itself. I'd in no way advocate this approach all the time, but in this case we prioritised getting the website working as hard as it could. In this case, it paid dividends and I'll tell you why.
Conclusions - Play to your strengths
Managing an SEO campaign without full access to a CMS undoubtedly poses a unique set of challenges. But what it also forced us to do was play to our strengths. Instead of overcomplicating any of the more 'technical' SEO issues, we focused on getting the basics right, and using data to structure our strategy. We took an unfocused, poorly structured website, and shaped it into something valuable and user-friendly. That's why we've seen 20,000 more unique visits per month than the site was receiving when we took over the campaign a year ago: we did what many people would consider 'basic SEO' really well.
I think this is what I want the key takeaway to be from this case study. It's probably true that SEOs are experiencing something of an identity crisis, but as Rand eloquently argued in his recent post, we still have a unique skill set that can be incredibly valuable to any business with an online presence. What we may consider 'basic' still has the potential to deliver fantastic results. Really, all we're trying to do is make our websites more user-friendly and more crawlable. If you do that, you'll get the results. Hopefully that's what I've illustrated in this post.
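
As a footnote to the Fixing Errors section: when a robots.txt has to travel through a slow helpdesk, it can be worth sanity-checking the rules before submitting them. Below is a minimal sketch using Python's standard `urllib.robotparser`. The rules and paths (`/protected/`, `/search`) are hypothetical, since the post does not say what the real file contained.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of protected pages and internal search.
ROBOTS_TXT = """\
User-agent: *
Disallow: /protected/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse the draft without fetching anything


def is_crawlable(path: str, agent: str = "*") -> bool:
    """Return True if the draft rules allow `agent` to fetch `path`."""
    return parser.can_fetch(agent, path)


for path in ["/products/widget", "/protected/price-list", "/search"]:
    print(path, "->", "allowed" if is_crawlable(path) else "blocked")
```

Running the important landing pages through a check like this helps confirm that a draft blocks only the places crawlers "had no business going in" and nothing else.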
|
